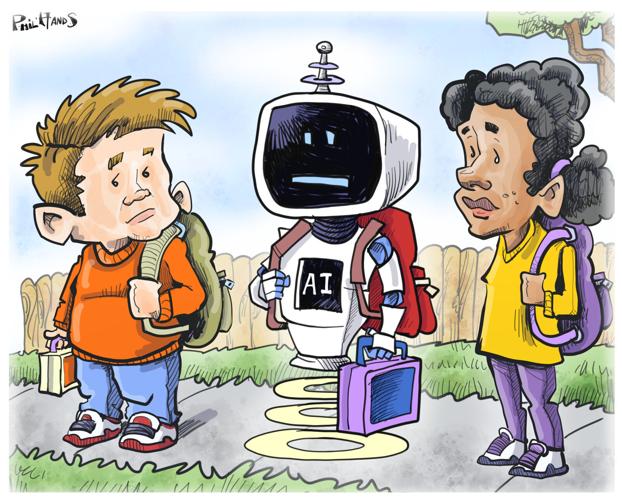

“I think, therefore I am,” philosopher René Descartes famously said in 1637. To think means to be alive. Learning how to think is why students go to school.

Liz Shulman

William Liang

As a high school student and teacher in different parts of the country, we believe education can still serve that purpose — but we’re worried.

Thinking was still a core value in 2023 when American education took the potential dangers of artificial intelligence seriously. Educational institutions signed contracts with AI detection companies such as GPTZero, ZeroGPT and Turnitin to deter students from cheating, and they adopted AI policies. They were concerned about academic integrity and critical thinking.

They knew the very thing that schools stand for — teaching young people to think, to become fully human — was under threat.

Two years later, under enormous pressure, schools sold out to AI companies, and students and teachers bear the cost. President Donald Trump’s executive order pressuring schools to integrate AI “into all subject areas” pretends it carries no risks.


Despite evidence suggesting AI is killing students’ critical thinking skills, schools have signed contracts with Big Tech companies, allowing the technology to alter the educational landscape and shape what schools expect from students and educators, who have little say on the matter. The Center for Democracy and Technology estimates the percentage of schools with policies permitting the use of AI for schoolwork nearly doubled from the 2022-23 to 2023-24 school years, even though only 28% of teachers report strong guidance on what to do when they suspect prohibited AI use.

Big Tech’s multibillion-dollar pledges to bring AI into classrooms and communities hail AI’s ability to “democratize access” and “reimagine pedagogy.” Students are told to use these tools “responsibly,” even as no one can explain what this responsibility looks like.

The question is no longer whether AI belongs in education, but how efficiently schools can accommodate it without disrupting daily operations.

Character AI last fall cut off teenagers from its artificial intelligence chatbots, resulting in “tearful goodbyes to AI companions,” the Wall Street Journal reported.

Even the country’s largest teacher unions, the American Federation of Teachers and the National Education Association, sold out to Microsoft, OpenAI and Anthropic. AFT signed a $23 million deal providing AI training to educators. One of that partnership’s first efforts is a “National Academy for AI Instruction,” where teachers learn how to use AI for generating lesson plans. The program plans to reach 10% of U.S. teachers over five years.

The justification is to put “teachers in the driver’s seat,” according to AFT President Randi Weingarten.

But in Texas, Arizona and California, students sit in front of screens for two hours a day while AI “teaches” them at a supposedly accelerated and individually tailored pace, reducing teachers to classroom monitors.

We doubt these students are learning how to think for themselves when they’re sitting with chatbots that mimic thoughts.

Some AI proponents such as political analyst Van Jones even claim AI is revolutionary for equity and inclusion. Jones called AI “the closest thing to reparations (Black Americans) will ever get.”

If AI equalizes anything, it’s access to cheating and the outsourcing of critical thinking. Every day, we see students use it to cheat, whether it’s to write a science lab report, generate an essay draft or do algebra.

Fifty percent of students admit AI is hurting their relationships with their teachers, and over 70% of teachers worry AI is diminishing students’ critical thinking skills.

No matter how much money is spent to integrate AI into schools “ethically” and “responsibly,” students have learned the easiest way to complete any assignment is to outsource their thinking by cheating.

“I use it for inspiration,” many students say when teachers talk with them about forming their own original thoughts. “It helps me organize.”

Many students ignore the barriers teachers put on assignments. Some educators use images of red traffic lights (no AI) and green lights (yes AI) at the top of worksheets. Students laugh at these paltry attempts to prevent cheating. We don’t blame them, of course, for becoming dependent on products marketed to them.

Other AI programs are less subtle. The “undetectable” AI assistant Cluely encourages users to “cheat on everything,” including tests and presentations. Perplexity pays students on college campuses for each student they get to download Comet, its AI browser, and it runs ads showcasing how the tool helps students cheat.

Even more worrisome than cheating on homework is the decline in students’ ability to think for themselves.

Why aren’t schools talking more about these dangers? Since the technology is here to stay, schools need better policies on its use. Students’ work needs to be monitored better, and teachers need to be supported in a return to paper and pencils and more work being done in class. If students cheat, at least they’re copying something that was human-generated.

Schools need to better address these dangers before the consequences outweigh the positives. Big Tech may have the money that schools need, but schools shouldn’t sell their souls for the funding they deserve. Besides, public schools remain massively underfunded despite their contracts with tech companies.

The two of us value what school is supposed to be, and we think everyone should, too: It’s the place where you learn how to think and how to be.

Shulman teaches English at Evanston Township High School and in the School of Education and Social Policy at Northwestern University. Liang is a high school junior living in the San Francisco Bay Area and a columnist at The Hill. They wrote this for The Chicago Tribune.