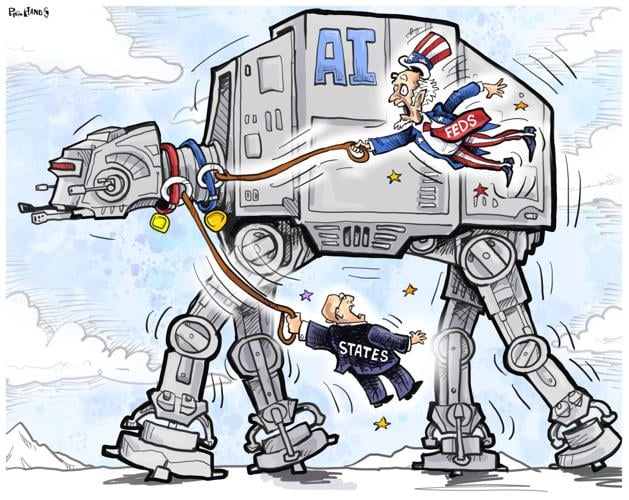

Congress has not enacted meaningful artificial intelligence legislation. Yet some in Washington insist the states should be blocked from legislating on AI.

This argument asks Americans to accept a federal government that is doing nothing to regulate AI. That is a failure of leadership.

J.B. Branch

The states often serve as the country’s first responders when new products begin affecting Americans before Congress responds. From consumer protection to automobile safety to labor laws, states have frequently moved first because they are closer to the public, faster to respond and better positioned to test practical safeguards. Artificial intelligence is no exception.

The case for preserving state authority is especially strong because the harms from AI are multiplying. Older Americans are targeted by AI-enabled fraud. Children, primarily young girls, are targeted with nonconsensual intimate imagery. Workers are being laid off by the thousands. Job hunters are screened by opaque AI systems that reject their applications without explanation.

Unsafe chatbots have been linked to the suicides of multiple people. Deceptive AI-generated political content is threatening democracy, and a new wave of it is likely to hit the 2026 midterm elections.

Despite these threats, Congress has twiddled its thumbs. In the absence of federal leadership, states are doing what federalism was designed to permit — responding to harm to protect their constituents when Congress fails to do so.

The American public wants AI regulation. Nearly 97% of respondents in a national survey said AI safety and security should be subject to rules and regulations, including strong majorities of Democrats, Republicans and independents. More than 80% oppose federal efforts to block state AI protections, especially when children’s privacy and safety are at stake.

Moreover, the world’s largest AI firms are awash in money. NVIDIA alone is valued at more than $4 trillion. Apple, Google, Microsoft, Meta and Amazon each command trillion-dollar valuations and are armed with enormous legal staffs, compliance teams, engineering capacity and lobbying operations. These are not fragile startups. They are multinational corporations.

Despite what they disingenuously argue, big technology companies in the U.S. do not need to build 50 separate systems, one for each state. They build products to comply with the strictest major state standards, often California’s, and use that baseline nationally.

We saw this with privacy law after California enacted its Consumer Privacy Act. Many firms tied their product standards to that law rather than build separate products for the other 49 states. That is how large markets set practical compliance norms. It’s a normal business practice.

Big tech companies are not passive victims of legislation. They are deeply involved in shaping it. More than 3,500 federal lobbyists, one-fourth of all federal lobbyists, worked on AI issues last year. The number of AI-related lobbying relationships is rapidly increasing.

That lobbying extends well beyond Washington. In state capitals nationwide, major technology companies actively influence legislation. A perfect example is OpenAI’s controversial push to steer California legislation on chatbots aimed at protecting teens. The idea of state lawmakers bullying or blindsiding Silicon Valley with legislation is fiction. Big Tech is not only in the room when legislation is considered; it is often drafting that legislation alongside state legislators.

AI investment is booming. Data center construction is exploding. American AI companies dominate the list of the 50 richest tech companies. If regulation were crushing innovation, why would we be seeing record market capitalizations and infrastructure growth?

State regulation remains the only meaningful safeguard between Americans and a rapidly expanding set of AI-related harms. That makes state authority more important than ever. Congress will one day establish federal standards. Until then, blocking states would give AI companies broad freedom to test powerful systems on the public before protections exist.

The states were never meant to remain idle while harm spreads and Washington stalls. They were designed to act quickly. In this moment they should, because waiting is itself a policy choice, and increasingly, a dangerous one for our society.

Branch is the legal counsel for AI governance and technology policy for Public Citizen. He wrote this for InsideSources.com.